Type: Article -> Category: AI Security

Predicting Crime with AI

A Step Toward the Real-Life 'Minority Report'?

Last Updated: 26th February 2026

Author: Nick Smith, with the help of ChatGPT

The 2002 sci-fi thriller Minority Report painted a fascinating yet dystopian picture of a world where crime prevention took center stage. The concept revolved around a Pre-Crime Unit powered by PreCogs, specialized humans capable of predicting future crimes with startling accuracy. Tom Cruise’s character leveraged these predictions to thwart crimes before they occurred, raising provocative ethical questions about free will, privacy, and the justice system.

Fast forward to today, and while we don’t have PreCogs, we do have something just as groundbreaking—Artificial Intelligence (AI). What if AI, with its growing role in analyzing data and predicting outcomes, could make the fictional premise of Minority Report a reality? Let’s explore how AI is moving us closer to a future where crimes could be predicted—and prevented—before they happen.

How AI Is Reshaping Policing: From Assumptions to Measurable Reality

Jan 2026 Update

1. From Reactive Policing to Evidence-Driven Systems

Traditional policing and public policy have historically relied on:

- human intuition,

- political assumptions,

- retrospective reporting,

- and fragmented datasets.

AI changes this by enabling continuous, data-driven measurement of real-world behaviour across time and geography.

Most modern policing AI systems do not predict individual intent. Instead, they operate at three technical levels:

- Pattern detection – identifying correlations across vast datasets

- Trend forecasting – detecting emerging crime waves early

- Policy evaluation – measuring whether interventions actually reduce harm

This represents a shift from belief-based governance to statistical accountability.

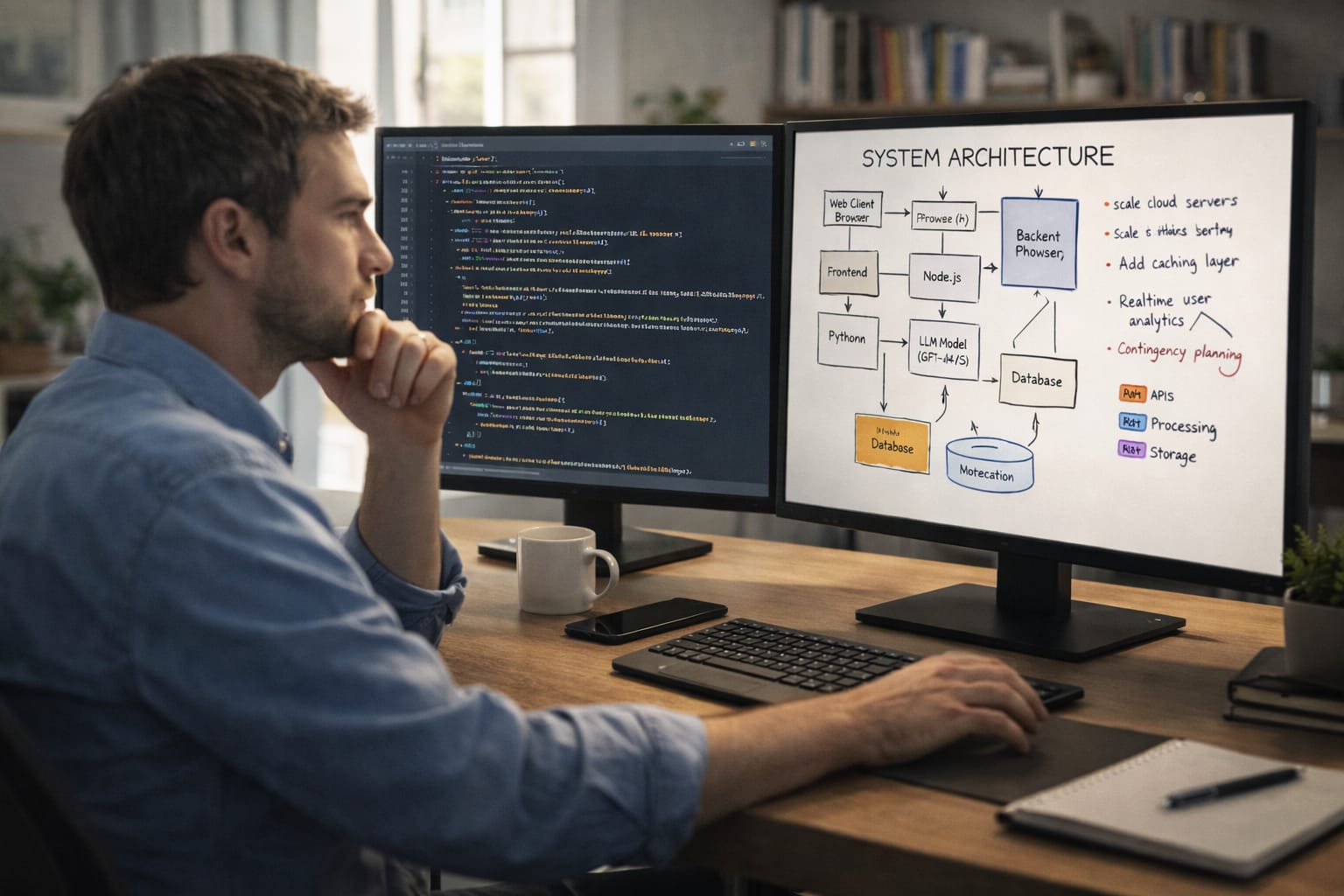

2. The Technical Core: How AI Actually “Predicts” Crime

2.1 What the models use (and what they don’t)

AI crime analytics systems typically ingest:

- Historical crime reports (time, location, type)

- Environmental data (lighting, transport hubs, events)

- Traffic flow and mobility patterns

- Socioeconomic indicators (aggregated, not personal)

- Weather and seasonal effects

- Anomaly signals from smart cameras and sensors

Crucially:

- They do not read thoughts

- They do not assign guilt

- They do not determine enforcement decisions autonomously

They produce probability distributions, not accusations.
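To make "probability distributions, not accusations" concrete, here is a minimal sketch. It assumes a hypothetical grid of map cells and a plain Poisson model, a deliberately simple stand-in for whatever model a real system uses. Historical counts per cell become the probability of at least one incident in that cell next week, so the output is a score per place, not per person.

```python
import math
from collections import Counter

# Hypothetical incident records as (week, grid_cell) pairs. A real system would
# also carry time of day, offence type and the contextual features listed above.
INCIDENTS = [
    (1, "A"), (1, "A"), (1, "B"),
    (2, "A"), (2, "C"),
    (3, "A"), (3, "A"), (3, "B"),
    (4, "A"), (4, "B"),
]

def weekly_rates(records, weeks):
    """Mean incidents per week in each grid cell (the Poisson rate estimate)."""
    counts = Counter(cell for _, cell in records)
    return {cell: n / weeks for cell, n in counts.items()}

def prob_at_least_one(rate):
    """P(N >= 1) when N ~ Poisson(rate), i.e. 1 - exp(-rate)."""
    return 1.0 - math.exp(-rate)

rates = weekly_rates(INCIDENTS, weeks=4)
forecast = {cell: round(prob_at_least_one(r), 3) for cell, r in sorted(rates.items())}
print(forecast)  # prints {'A': 0.777, 'B': 0.528, 'C': 0.221}
```

Nothing in the output names an individual; it only ranks places by how likely they are to see at least one incident.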

2.2 Crime waves, not criminals

AI is most effective at identifying:

- temporal spikes (e.g. knife crime surges during specific weeks)

- geographic drift (crime moving from one area to another)

- copycat effects following high-profile incidents

- secondary effects of policy changes (e.g. displacement, not reduction)
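A temporal spike can be flagged by asking how unlikely this week's count would be if the recent average still held. The sketch below assumes hypothetical weekly counts, an 8-week trailing baseline and a 1% threshold, and uses a Poisson tail probability as the test. It only says a count is statistically unusual; whether that reflects a genuine rise or a reporting artefact still needs human review.

```python
import math

def poisson_tail(k, lam):
    """P(X >= k) for X ~ Poisson(lam), computed as 1 - P(X <= k - 1)."""
    cdf = sum(math.exp(-lam) * lam**i / math.factorial(i) for i in range(k))
    return 1.0 - cdf

def flag_spikes(weekly_counts, baseline_weeks=8, alpha=0.01):
    """Flag weeks whose count is improbably high given the trailing baseline mean.

    Returns (week_index, observed_count, baseline_mean, tail_probability) tuples.
    """
    flagged = []
    for t in range(baseline_weeks, len(weekly_counts)):
        lam = sum(weekly_counts[t - baseline_weeks:t]) / baseline_weeks
        p = poisson_tail(weekly_counts[t], lam)
        if p < alpha:
            flagged.append((t, weekly_counts[t], lam, p))
    return flagged

# Hypothetical weekly counts of one offence type in one district.
counts = [4, 5, 3, 4, 6, 5, 4, 5, 5, 14, 6]
for week, observed, baseline, p in flag_spikes(counts):
    print(f"week {week}: {observed} incidents vs baseline {baseline:.1f} (p={p:.5f})")
```

Only week 9 (14 incidents against a baseline of about 4.6) is flagged; the ordinary week-to-week wobble around it is not.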

This allows police and councils to ask better questions:

- Is this a genuine rise or a reporting artefact?

- Did this intervention reduce harm or merely shift it elsewhere?

- Are resources aligned with reality or outdated assumptions?
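The displacement question can be approached with a before/after comparison against a control area. This sketch assumes three hypothetical areas (the targeted area, a surrounding buffer, and a comparable control area) and scales each area's "before" count by the control area's trend. A fall in the target that is matched by a rise in the buffer suggests harm was shifted rather than reduced. It is a simplified illustration, not a full evaluation design; displacement research uses more careful measures such as the weighted displacement quotient.

```python
def control_adjusted_change(before, after, control_before, control_after):
    """Observed 'after' count minus the count the control area's trend predicts."""
    expected_after = before * (control_after / control_before)
    return after - expected_after

def displacement_check(target, buffer, control):
    """Each argument is a hypothetical (before, after) pair of incident counts."""
    target_change = control_adjusted_change(*target, *control)
    buffer_change = control_adjusted_change(*buffer, *control)
    return {
        "target_change": target_change,  # negative = fall in the targeted area
        "buffer_change": buffer_change,  # positive = rise next door (possible displacement)
        "net_change": target_change + buffer_change,
    }

result = displacement_check(target=(100, 60), buffer=(50, 80), control=(200, 200))
print(result)
```

Here the targeted area falls by 40 incidents, but 30 of them reappear in the buffer, so the net reduction is only 10.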

3. Smart Cameras, Roads, and Urban Sensing

3.1 AI-enabled road and surveillance systems

Modern “smart” cameras now function as real-time data sensors, not just recording devices.

They can detect:

- abnormal traffic patterns linked to organised crime

- stolen or cloned vehicle movement networks

- sudden congestion associated with criminal activity

- repeat location usage suggesting illegal operations
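One approach to spotting cloned number plates is an "impossible travel" check: the same plate read by two cameras that are too far apart for the time between the reads. A minimal sketch, assuming hypothetical camera coordinates, fictional plate strings and an arbitrary 150 km/h plausibility threshold. Note that it flags a plate pattern for review rather than identifying a driver.

```python
import math
from datetime import datetime

# Hypothetical camera positions as (latitude, longitude): roughly Manchester and London.
CAMERAS = {
    "cam_north": (53.4808, -2.2426),
    "cam_south": (51.5074, -0.1278),
}

def haversine_km(a, b):
    """Great-circle distance in kilometres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))

def possible_clones(reads, max_kmh=150.0):
    """Return plates whose consecutive reads imply an implausible travel speed."""
    by_plate = {}
    for plate, cam, ts in sorted(reads, key=lambda r: (r[0], r[2])):
        by_plate.setdefault(plate, []).append((cam, ts))
    flagged = set()
    for plate, sightings in by_plate.items():
        for (cam1, t1), (cam2, t2) in zip(sightings, sightings[1:]):
            km = haversine_km(CAMERAS[cam1], CAMERAS[cam2])
            hours = (t2 - t1).total_seconds() / 3600
            if km > 0 and (hours == 0 or km / hours > max_kmh):
                flagged.add(plate)
    return flagged

# Fictional plates and timestamps.
reads = [
    ("AB12CDE", "cam_north", datetime(2026, 1, 5, 10, 0)),
    ("AB12CDE", "cam_south", datetime(2026, 1, 5, 10, 30)),
    ("XY34ZZZ", "cam_north", datetime(2026, 1, 5, 8, 0)),
    ("XY34ZZZ", "cam_south", datetime(2026, 1, 5, 12, 0)),
]
print(possible_clones(reads))
```

The first plate would need to cover about 260 km in 30 minutes and is flagged; the second makes the same trip in four hours and is not.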

Importantly, these systems increasingly flag patterns, not people.

This enables:

- early warnings of organised crime logistics

- better modelling of crime infrastructure, not just incidents

- reduction of random or reactive stop-and-search tactics

4. AI as a Policy Auditor, Not a Moral Judge

4.1 Measuring what politicians prefer not to measure

One of AI’s most uncomfortable contributions is its ability to quantify outcomes rather than intentions.

AI systems can evaluate:

- whether increased policing actually reduces crime

- whether housing policy correlates with theft, violence, or stability

- whether welfare changes precede measurable social stress

- whether “tough on crime” rhetoric produces statistical improvement
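Questions like "does increased policing actually reduce crime?" are often answered with a difference-in-differences comparison: the change in the area that received the intervention minus the change in a comparable area that did not. A minimal sketch with hypothetical monthly counts; the result is only as trustworthy as the assumption that both areas would otherwise have moved in parallel.

```python
from statistics import mean

def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Difference-in-differences estimate of an intervention's effect.

    Assumes the treated and control areas would have followed parallel trends
    had the intervention not happened; if that assumption fails, so does the estimate.
    """
    treated_change = mean(treated_after) - mean(treated_before)
    control_change = mean(control_after) - mean(control_before)
    return treated_change - control_change

# Hypothetical monthly incident counts before and after extra patrols in one area.
effect = diff_in_diff(
    treated_before=[118, 122, 120], treated_after=[101, 99, 100],
    control_before=[108, 112, 110], control_after=[104, 106, 105],
)
print(effect)
```

A naive before/after comparison would credit the patrols with a fall of 20 incidents a month; subtracting the 5-incident fall that also happened in the comparison area leaves an estimated effect of 15.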

This removes plausible deniability.

AI does not care about:

- party ideology

- press narratives

- electoral cycles

It measures what happens next.

4.2 Bias vs exposure

Much criticism claims AI “creates bias”. In reality, AI often reveals bias already embedded in society.

Examples:

- Disproportionate arrest and stop figures reflect a history of disproportionate enforcement

- Crime concentration reflects economic deprivation patterns

- Recidivism correlations expose systemic failure, not individual morality
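The point about enforcement history can be illustrated with a toy model. It assumes, purely for illustration, two hypothetical areas with identical underlying offending, one of which has received three times the patrol hours, and a detection chance that rises linearly with patrol time. Real detection processes are far messier.

```python
# Hypothetical illustration: two areas with the SAME underlying offence count,
# but area "X" has historically received three times the patrol hours.
AREAS = {
    "X": {"true_offences": 100, "patrol_hours": 300},
    "Y": {"true_offences": 100, "patrol_hours": 100},
}
DETECTION_PER_HOUR = 0.002  # assumed chance, per patrol hour, that an offence gets recorded

def recorded_offences(area):
    """What reaches the database: true offences times a patrol-dependent detection rate."""
    p_detect = min(1.0, DETECTION_PER_HOUR * area["patrol_hours"])
    return area["true_offences"] * p_detect

def recorded_per_patrol_hour(area):
    """Normalising by enforcement exposure removes the patrol-driven difference."""
    return recorded_offences(area) / area["patrol_hours"]

for name, area in AREAS.items():
    print(name, round(recorded_offences(area), 1), round(recorded_per_patrol_hour(area), 3))
```

Area X shows three times as many recorded offences as Y (60 against 20), even though the underlying numbers are equal. A model trained on the raw records would learn the patrol history; dividing by patrol exposure makes that history visible.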

The uncomfortable truth:

AI does not invent inequality — it quantifies it.

The political challenge is not AI’s accuracy, but whether leaders are willing to confront what the data shows.

5. Beyond Policing: Fraud, Abuse, and State Accountability

5.1 Benefit and welfare fraud detection

AI excels at identifying:

- anomalous claim behaviour

- repeated patterns across apparently unconnected claimants

- sudden shifts in claims correlated with policy changes

- organised exploitation rather than individual error

Properly deployed, this:

- reduces blanket suspicion of claimants

- targets organised abuse rather than vulnerable individuals

- improves fairness through consistency
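Detecting "repeated patterns across apparently unconnected claimants" can be as simple as grouping claims by attributes that should rarely be shared. The sketch below assumes hypothetical claim records and field names (`bank_account`, `phone`) and an arbitrary threshold of three distinct claimants. It surfaces a cluster for review rather than judging any single person, which is what lets scrutiny focus on organised patterns.

```python
from collections import defaultdict

# Hypothetical claim records; the field names are assumptions for illustration.
CLAIMS = [
    {"claim_id": "C1", "claimant": "P1", "bank_account": "ACC-001", "phone": "0700-111"},
    {"claim_id": "C2", "claimant": "P2", "bank_account": "ACC-002", "phone": "0700-222"},
    {"claim_id": "C3", "claimant": "P3", "bank_account": "ACC-009", "phone": "0700-333"},
    {"claim_id": "C4", "claimant": "P4", "bank_account": "ACC-009", "phone": "0700-444"},
    {"claim_id": "C5", "claimant": "P5", "bank_account": "ACC-009", "phone": "0700-333"},
]

def shared_attribute_clusters(records, fields=("bank_account", "phone"), min_claimants=3):
    """Return attribute values shared by at least `min_claimants` distinct claimants."""
    seen = defaultdict(set)
    for record in records:
        for field in fields:
            seen[(field, record[field])].add(record["claimant"])
    return {key: sorted(people) for key, people in seen.items() if len(people) >= min_claimants}

print(shared_attribute_clusters(CLAIMS))
```

Only the bank account used by three otherwise unrelated claimants is surfaced. A shared account can have legitimate explanations, such as one person managing benefits on behalf of several others, which is why the output is a lead for human review rather than a finding.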

5.2 Housing and government contract fraud

AI systems are increasingly capable of:

- detecting shell company networks

- identifying bid-rigging patterns

- spotting inflated pricing trends

- mapping connections between contractors and repeated awards
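Mapping connections between contractors is essentially a graph problem. This sketch assumes hypothetical company records and contract awards, links companies that share a director or registered address using a union-find structure, and then counts awards per connected network rather than per company name.

```python
from collections import defaultdict

# Hypothetical company records: (company, director, registered_address).
COMPANIES = [
    ("Alpha Ltd", "D. Jones", "1 High St"),
    ("Beta Ltd", "D. Jones", "9 Mill Rd"),
    ("Gamma Ltd", "S. Patel", "9 Mill Rd"),
    ("Delta Ltd", "R. Brown", "4 Park Ave"),
]
AWARDS = ["Alpha Ltd", "Gamma Ltd", "Beta Ltd", "Delta Ltd", "Alpha Ltd"]  # hypothetical winners

def find(parent, x):
    """Union-find root lookup with path halving."""
    while parent[x] != x:
        parent[x] = parent[parent[x]]
        x = parent[x]
    return x

def company_networks(companies):
    """Group companies linked by a shared director or address."""
    parent = {name: name for name, _, _ in companies}
    first_owner = {}  # (kind, value) -> first company seen with it
    for name, director, address in companies:
        for key in (("director", director), ("address", address)):
            if key in first_owner:
                parent[find(parent, name)] = find(parent, first_owner[key])
            else:
                first_owner[key] = name
    groups = defaultdict(set)
    for name in parent:
        groups[find(parent, name)].add(name)
    return list(groups.values())

def awards_per_network(companies, awards):
    """Count contract awards per connected network, most awards first."""
    network_of = {}
    for group in company_networks(companies):
        label = " / ".join(sorted(group))
        for name in group:
            network_of[name] = label
    counts = defaultdict(int)
    for winner in awards:
        counts[network_of[winner]] += 1
    return sorted(counts.items(), key=lambda kv: -kv[1])

print(awards_per_network(COMPANIES, AWARDS))
```

Three apparently separate bidders collapse into one network that won four of the five awards. Shared registered addresses can be innocent (formation agents often host many companies), so this again produces a lead, not a conclusion.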

Ironically, this is where AI adoption often slows.

Why?

Because AI scrutiny scales upwards as effectively as it scales downwards.

6. Ethics Reframed: The Real Question Isn’t Surveillance

The ethical debate is often misframed as:

“Should AI watch people?”

The more accurate question is:

“Who gets measured, and who doesn’t?”

AI raises difficult but necessary issues:

- Should government decisions be immune from statistical evaluation?

- Should ideology override measurable harm?

- Should transparency apply only to citizens, not institutions?

Ethical AI policing is not about pretending data doesn’t exist —

it is about ensuring that data is used symmetrically and responsibly.

7. The Real Shift: From Narrative to Feedback Loops

AI introduces something historically rare in governance:

continuous feedback loops.

Instead of:

- policy → headlines → denial

We get:

- policy → data → outcomes → adjustment

This does not eliminate human judgement —

it forces it to confront evidence.

8. Conclusion: AI as a Mirror, Not a Master

AI is not reshaping policing by predicting criminals.

It is reshaping policing by:

- replacing assumptions with measurement

- replacing ideology with feedback

- replacing reactive force with strategic prevention

The resistance to AI in this space is often less about ethics and more about loss of narrative control.

Because once outcomes are measured at scale,

the question is no longer “what did we intend?”

but “what actually happened?”

And AI remembers the answer.

AI Use in Policing & Fraud Detection

How to Stop Fraud with AI Security Controls | Steve Winterfeld, Akamai

YouTube Channel: TFiR

Police Are Now Using AI To Predict Crime! w/ Dr. Calvin Lawrence | Perspektives With Bank

YouTube Channel: THE BIG FACTS NETWORK

AI's Global Face Recognition: The Future is Here? #shorts

YouTube Channel: Brian Sanford

New 4D AI Cameras on UK Roads – What They Can See Will Shock You

YouTube Channel: Brown Car Guy

New security cameras with AI will help the city fight crime

YouTube Channel: WKBW TV | Buffalo, NY

The UK Develops AI "Murder Prediction" Tool | Vantage with Palki Sharma | N18G

YouTube Channel: Firstpost

A.I. policing, youth programs credited for reduced crime rate in Macon

YouTube Channel: 41NBC

Predictive Policing: Revolutionizing Crime Prevention Through Data

YouTube Channel: VARINDIA


AI Questions and Answers section for Predicting Crime with AI: A Step Toward the Real-Life 'Minority Report'?

The following question about this article was answered by Jeannie, the site’s AI assistant.

Visitor asked: How can AI help in child protection?

Jeannie’s answer to the question: AI can significantly contribute to child protection in many ways. For instance, AI can help in predictive policing by analyzing data and identifying potential threats to children in certain areas. It can also assist in recognizing patterns and behavior that may indicate child exploitation or abuse. Artificial Intelligence can be used to analyze online content in real-time and identify harmful situations such as cyberbullying or grooming. Moreover, it can contribute to the early identification of child trafficking by analyzing patterns and generating alerts on potential risks. Therefore, AI represents a powerful tool in enhancing child protection efforts.

Date: 2025-02-06
